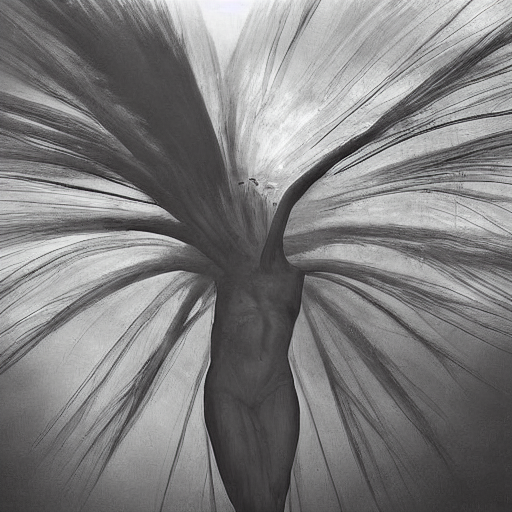

Biased Dreams

monumental, dramatic, atmospheric, transcendent

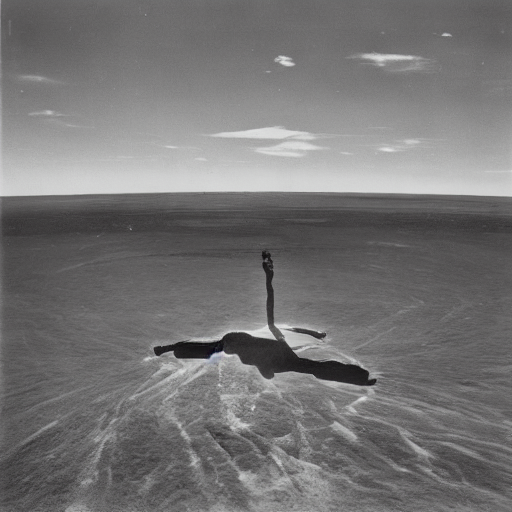

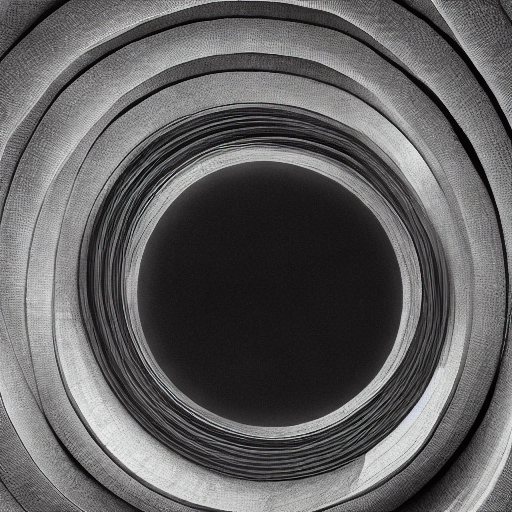

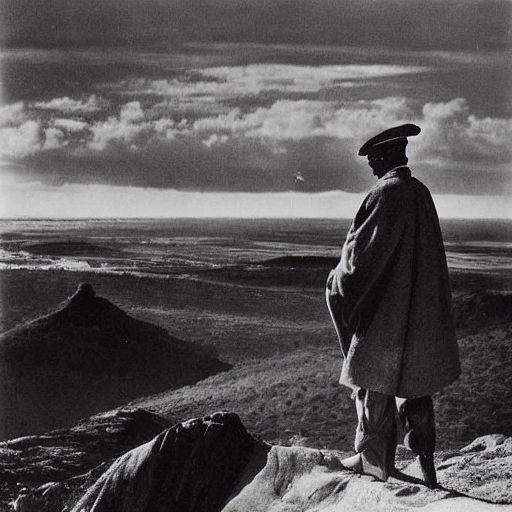

Mostly diffusion models try to be as unbiased as possible, letting you recreate any image. Prompts are mostly hyper specific, guiding the model to create the image that you want. Here we reverse both: bias the model and make the prompt completely generic, to see where the model wants to go. The model fills in something you couldn't have described, and couldn't reproduce by asking for it directly.

What you're looking at is latent space photography. The model has been fine-tuned on a tiny dataset of eight images, with no regularization, so it's been permanently biased. Not totally broken, just nudged. And then you point it at four vague adjectives and let it go.

Same model, same prompt, same parameters, only the seed changes.

Recipe

Base model: Stable Diffusion v1.5. Training method: Dreambooth, full fine-tune — not LoRA. Eight images of a single subject, resized to 512×512.

The important part: no prior preservation loss. Without class regularization, nothing pulls the model back toward its original distribution. It just drifts.

accelerate launch train_dreambooth.py \

--pretrained_model_name_or_path='runwayml/stable-diffusion-v1-5' \

--instance_data_dir='training_images/' \

--output_dir='models/dreamy' \

--instance_prompt='a photo of sks person' \

--resolution=512 \

--train_batch_size=1 \

--train_text_encoder \

--use_8bit_adam \

--learning_rate=2e-6 \

--lr_scheduler='constant' \

--lr_warmup_steps=0 \

--max_train_steps=1200 \

--mixed_precision=fp16Learning rate and step count define a narrow corridor:

- 2e-6 / 1200 steps — sweet spot. Prompts still work, but they carry the training data as latent texture.

- 2e-6 / 1600 steps — too far. Model forgets language.

- 4e-6 / 1600 steps — cooked. Mode collapse. Every output is a smear of the training set.

Prompt

The prompt matters, but not the way you'd think. We tested hundreds: detailed scene descriptions, single words, arbitrary strings, empty prompts. Specificity kills it. Detail tokens ("hyper detailed", "filigree", "exacting") produced technically richer outputs that were less interesting in every case. The model needs room to drift.

What worked for us was four adjectives:

monumental, dramatic, atmospheric, transcendent

Just vibes, basically. No nouns, no scene — just a direction. The model fills in the rest from its own biased priors.

Guidance scale: 3.0 — really low for SD work. At standard guidance (7–10), the model tries to do what you asked. At 3.0, it mostly ignores you. That's what we want.

Steps: 50. We tried 70 and it got overwrought. Sampler: DDIM — deterministic, so you get the same image for the same seed. Useful for comparing runs.

pipe = StableDiffusionPipeline.from_pretrained(

model_path,

scheduler=DDIMScheduler(

beta_start=0.00085,

beta_end=0.012,

beta_schedule="scaled_linear",

clip_sample=False,

set_alpha_to_one=False,

),

torch_dtype=torch.float16,

).to("cuda")

images = pipe(

prompt,

num_inference_steps=50,

guidance_scale=3.0,

generator=torch.Generator("cuda").manual_seed(seed),

).imagesWhat We Tried

You also need style — we swept 80+ artist references. Tried collaborative prompts between artists. Ran overtrained models at higher learning rates. Tried minimal prompts — single words, frame numbers, empty strings.

The original combination was always better, and not by a small margin. I don't have a great theory for why. Something about this specific collision of biased latent space and vague adjectives just works.

More specific prompts lost the strangeness. More training lost everything.

Upscale

512×512 is interesting but lacks texture for print. We used SUPIR, a diffusion-based upscaler on an SDXL backbone, to bring them to 2048×2048.

SUPIR isn't interpolation — it hallucinates new detail guided by the source. At 4× it adds grain and texture that feel like they belong.

# SUPIR upscale: 512 → 2048

# Model: SUPIR-v0Q (Quality mode)

# Backbone: JuggernautXL v9

edm_steps = 30

s_cfg = 3.0 # guidance ramp endpoint

s_stage1 = -1 # auto

control_scale = 0.9 # high fidelity to source

color_fix = "Wavelet"

# Positive: "sharp textures, fine grain, rich color depth"

# Negative: "blurry, low quality, jpeg artifacts"

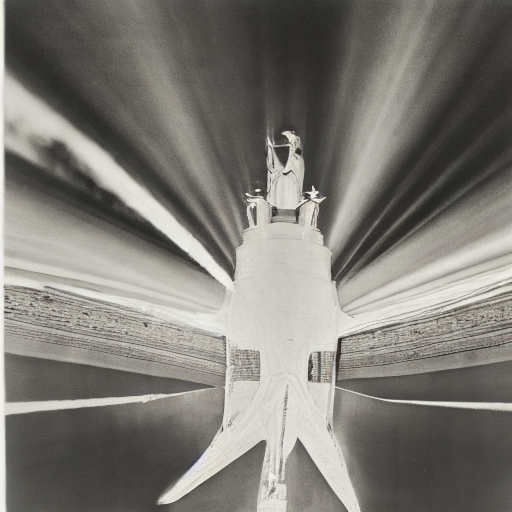

# ~3 minutes per image on a single GPU512×512 source:

2048×2048 SUPIR upscale:

Pipeline

Every image in this post was generated at 512×512 in about 12 seconds, then upscaled in about 3 minutes. The whole thing runs on a single consumer GPU — a 3090 next to my water heater.